November 20, 2023

End of the Journey

As a CEO, I make decisions daily - for users, for teams. But deciding to shut down the company? That was the hardest call of my life.

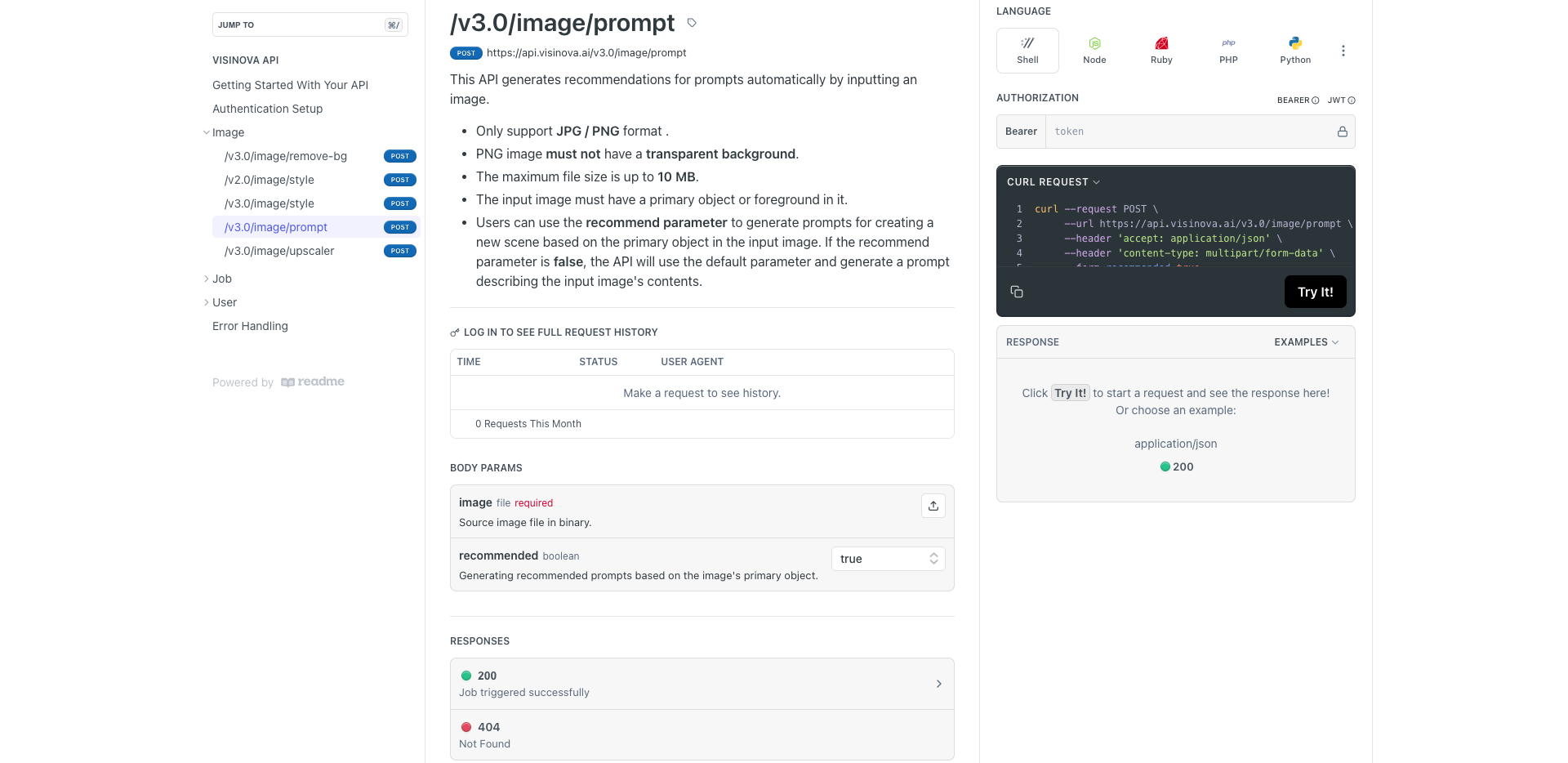

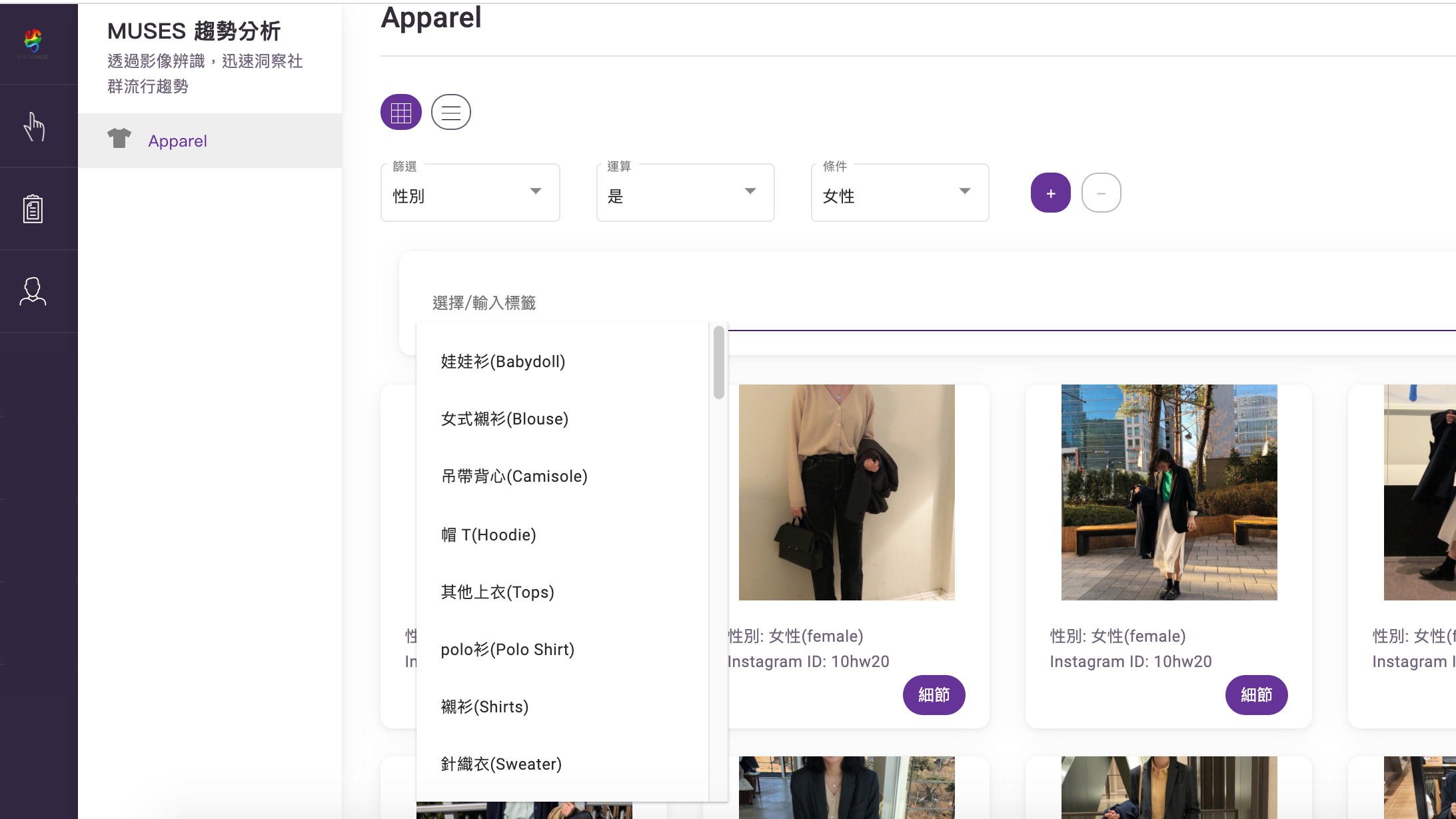

Throughout this journey, What Went Well was that we successfully applied computer vision (CV) technology across wildly diverse domains: B2B to B2C, edge computing to APIs. This gave us rare experience in developing products for the next AI era.

What Didn't Go Well was our fundraising. When you build a team capable of training models, you are working at the foundation layer, and you need resources - not only people but also computing power. All of this requires significant capital.

What We've Learned can be summarized in three points:

First, pick the right market. This is the most crucial yet most challenging thing to do, because the "right market" depends on your prediction of the future and on why you are obsessed with that future (your vision). It also requires co-founders who can attract exactly the right talent.

Second, figure out how to go to market. Go-to-Market ≠ “Build It and They’ll Come.” Our early assumption was: “Our tech is revolutionary – users will flock post-launch!” But the reality is "no exposure, no existence". Without users, you get no feedback. Without feedback, no growth. Launching isn't the finish line - it's barely step 0.5.

Lastly, find the right people to join you. Looking back on this journey, we assembled Taiwan's brightest in R&D, business and design. Other founders constantly asked us why our team members stayed for so long (with compensation lower than Silicon Valley packages).

From our perspective, culture is the thing founders most easily overlook. Sometimes, it is the culture that paves the path to your vision. Even now, as ShowHue winds down, the culture we built persists in every member (even interns who stayed for just a few months).

This is why we are journaling our story here. You now have the DNA; you can re-encode it to develop the next era of AI.

With sincere regards,

Co-founder & CEO, Hannie Liu